LLMs are no longer futuristic tools. They’re already helping businesses respond to customers faster, summarize documents, and generate content at scale. And the growth is just getting started.

According to Grand View Research, the global LLM market was valued at $5.6 billion in 2024 and is expected to reach over $35.4 billion by 2030, growing at a CAGR of 36.9%. This rapid rise reflects the deep integration of LLMs into enterprise AI strategies.

But building or fine-tuning a model is only one part of the puzzle. Once deployed, you need to manage its behavior, track performance, version prompts, and ensure compliance. Without the right framework, things can quickly spiral out of control.

That’s where LLMOps comes in. It offers a structured way to manage every step of your LLM’s lifecycle, from development and testing to deployment and monitoring. With the right LLMOps pipeline, you can scale AI confidently, reduce errors, and deliver consistent results across teams.

In this blog, you will learn what LLMOps is, how it differs from MLOps, and how to apply it in real enterprise environments using the right LLMOps tools. For a deeper understanding, explore the complete LLM evaluation guide for enterprises to see how effective LLMOps frameworks enhance AI performance and governance.

What Is LLMOps?

LLMOps stands for “Large Language Model Operations.” It refers to a set of tools and workflows that help you manage every stage of the LLM lifecycle, from training and tuning to deployment and real-time monitoring.

What sets Enterprise LLMOps apart is its ability to handle the scale and complexity of language models used in enterprise environments. These models work with large, unstructured datasets and dynamic prompts. A small change in input or context can impact outcomes, so you need structured systems to track, test, and control behavior.

LLMOps helps you do just that by creating a shared operational layer between your AI, DevOps, and IT teams. It’s designed to support continuous improvement and reliable production performance.

With LLMOps, you can:

- Track prompts and model versions to ensure consistency and auditability.

- Monitor live outputs to detect issues like hallucinations, bias, or drift.

- Fine-tune models using domain-specific datasets with minimal disruption.

- Control access and ensure compliance across all stages of the LLMOps pipeline.

- Streamline workflows so non-technical roles can contribute to prompt design and testing.

These operations make scaling LLMs across departments possible, whether you’re in customer service, legal, finance, or HR.

According to Gartner, more than 30% of the increase in API demand by 2026 will come from LLM-powered tools. This shows how fast adoption is growing, and why you need reliable operations in place now.

LLMOps for enterprises doesn’t just help you manage LLMs. It helps you keep pace with change, reduce risk, and scale AI with confidence. Discover how small language models in AI development are shaping enterprise LLMOps strategies.

How Is LLMOps Different from MLOps? Key Differences Explained

You may already be using MLOps to manage traditional machine learning models. But LLMOps focuses on a different type of model and solves a more complex set of problems.

Machine learning models typically handle structured data and generate predictable outputs like scores, categories, or forecasts. In contrast, large language models work with unstructured text and produce open-ended responses. This shift introduces new requirements in terms of infrastructure, LLMOps monitoring, testing, and governance.

LLMOps builds on some of the concepts from MLOps, but it introduces more advanced systems to support the unpredictable and resource-heavy nature of LLMs. Let’s compare both across key areas:

| Category | MLOps | LLMOps |
| --- | --- | --- |
| Model Type | Structured ML models | Foundation or generative LLMs |
| Data Handling | Tabular or labeled datasets | Unstructured inputs like text, PDFs, and code |
| Training Method | Frequent retraining using structured data | Fine-tuning or adapting pre-trained LLMs using prompts or specific examples |
| Development Layer | Feature stores, model registries, and data pipelines | Vector databases, embeddings, prompt engineering, and token limits |
| Infrastructure | Runs on CPUs or single GPUs | Requires distributed computing, GPU clusters, and memory optimization |
| Version Control | Tracks data, model weights, and scripts | Also includes prompt versions, tokenizers, context length, and embeddings |
| Testing and Release | Standard unit tests and metrics like accuracy or F1-score | Includes human evaluation, A/B tests, and output scoring for factuality and toxicity |
| Observability | Focuses on model drift, data quality, and latency | Also tracks hallucinations, bias, coherence, relevance, and safety concerns |
| Security and Governance | Manages access, compliance, and fairness | Adds controls for prompt injections, PII leakage, model misuse, and audit trails |
| Use Cases | Forecasting, classification, scoring | Text generation, summarization, chat interfaces, and semantic search |

LLMOps is not just about scaling language models. It is about making sure those models are safe, accurate, and aligned with business goals over time. It includes a wider range of moving parts, from embedding stores to prompt templates, and often connects with third-party APIs and RAG pipelines. Together, these parts form the basis of an LLMOps architecture for large-scale deployments, and they build on advances in LLM embeddings.

Another key difference lies in testing. Traditional ML outputs are often easy to validate through metrics. LLM outputs, however, are subjective and context-dependent. You need additional validation layers and human feedback to keep them on track, which makes this a core LLMOps best practice.

LLMOps also places a stronger focus on long-term performance. While MLOps may retrain models regularly to handle drift, LLMOps emphasizes post-deployment monitoring to track quality and prevent failure in production. This includes tracking prompt performance, managing updates from external APIs, and enforcing enterprise-level security using a robust LLMOps pipeline.

In short, MLOps supports predictable models trained on structured data, while LLMOps supports more flexible, creative models that need deeper oversight, stronger governance, and a different deployment strategy. Understanding these LLMOps fundamentals is essential for scaling safely, especially when you are selecting LLMOps tools or evaluating enterprise-grade LLMOps platforms. For IT leaders exploring adjacent capabilities, it’s also worth reviewing practical AIOps use cases in IT operations to see how automation is driving efficiency across enterprise workflows.

Top Benefits of Implementing LLMOps in Enterprise AI Workflows

LLMOps gives you the structure needed to manage large language models reliably and at scale. It brings consistency across teams, helps reduce operational costs, and improves model performance, all while keeping your workflows secure and compliant. For enterprise environments, adopting Enterprise LLMOps ensures alignment with business goals and compliance needs.

1. Accelerated Deployment

LLMOps helps you move faster from development to production. By automating steps like prompt tuning, validation, and model packaging, you reduce manual intervention and speed up release cycles.

- Coordinate deployments across teams with a shared LLMOps pipeline.

- Track prompt changes, model versions, and outcomes in one place.

- Reduce delays with automated testing and rollback options.

This results in quicker rollouts for AI features across departments, without compromising quality. This approach is core to LLMOps fundamentals for enterprise adoption.

2. Resource and Cost Efficiency

Running LLMs at scale can quickly become expensive. LLMOps helps you manage this by optimizing how models are trained, deployed, and served.

- Apply efficient fine-tuning methods like LoRA to minimize GPU usage.

- Choose right-sized models for each use case to avoid overprovisioning.

- Monitor workloads to eliminate idle compute time.

You get better performance while keeping infrastructure and cloud costs under control using advanced LLMOps tools for enterprises.
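To see why a method like LoRA cuts GPU usage, it helps to count trainable parameters. LoRA freezes the original weight matrix and trains two small low-rank matrices instead. The sketch below compares the two counts for a single layer; the layer size and rank are hypothetical values picked only for illustration, not a recommendation.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when updating a full d_out x d_in weight matrix."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for a rank-r LoRA update W + B @ A.

    B has shape (d_out, rank) and A has shape (rank, d_in),
    so only rank * (d_out + d_in) values are trained.
    """
    return rank * (d_out + d_in)


# Hypothetical layer size and rank, chosen only for illustration.
d_out, d_in, rank = 4096, 4096, 8
full = full_finetune_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")
```

For this example layer, the LoRA update trains 65,536 values instead of 16,777,216, which is about 0.39% of the full matrix. Real savings depend on which layers you adapt and the rank you choose.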

3. Stronger Governance and Compliance

With growing regulatory pressure, LLMOps ensures your AI systems are transparent and accountable. It helps you trace how outputs are generated and who made changes at every stage.

- Maintain logs of model versions, inputs, and prompt templates.

- Control access to sensitive models and data.

- Ensure compliance with internal and industry-specific standards.

This governance is critical in enterprise-grade LLMOps platforms, especially for banking, financial services, and insurance (BFSI), healthcare, and legal teams managing sensitive information, and it aligns with the LLMOps framework for enterprise applications.

4. Real-Time Monitoring and Risk Mitigation

LLMOps offers tools to monitor model behavior in production, so you can quickly catch issues that affect reliability or trust.

- Detect model drift or inconsistencies in generated content.

- Set up alerts for hallucinations, bias, or latency spikes.

- Use dashboards to track performance across environments.

These safeguards help protect both customer experience and brand credibility. They are established LLMOps best practices and let you act on problems before they reach users.

5. Aligned, Cross-Functional Collaboration

Enterprise AI projects involve more than just data scientists. With LLMOps, your data, IT, and product teams can work in sync on shared goals.

- Collaborate on prompts, experiments, and release plans.

- Centralize feedback and documentation for better decision-making.

- Standardize processes across departments using LLMs.

This improves efficiency while keeping your deployments aligned with business needs. Collaboration matters even more in LLMOps than in MLOps, because prompts, retrieval content, and review decisions are often owned by people outside the data science team.

6. Scalable Model Management

As your AI footprint grows, so does the need to manage models across different teams and tasks. LLMOps simplifies scaling by offering:

- Central prompt and model repositories.

- Support for multi-model deployments with versioning.

- Integration with CI/CD pipelines to automate updates.

Whether you’re expanding across support, sales, or operations, LLMOps ensures your systems scale without friction.

Also Read: How Large Language Models Transform Enterprise Workflows

How to Successfully Implement LLMOps in Large-Scale Enterprises

Getting LLMs to work well across your business needs more than just a working model. You need a clear setup that connects your people, processes, and tools. LLMOps helps you do that by giving structure and control to your AI efforts.

1. Set Up Clear, Repeatable Workflows

Start by laying out each step in the model’s journey. This includes writing prompts, tuning, testing, and releasing. Make these steps easy to repeat so teams can follow a shared path.

- Use templates for prompts, testing, and reviews.

- Keep tasks modular so they can be reused or improved over time.

This approach brings consistency and makes it easier to expand across teams using Enterprise LLMOps.
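As one way to picture a reusable prompt template, here is a minimal sketch using Python's standard `string.Template`. The template text, the `render_summary_prompt` helper, and the placeholder names are all hypothetical; your own templates would reflect your use case.

```python
from string import Template

# Hypothetical shared template; teams fill in the placeholders per use case.
SUMMARY_PROMPT = Template(
    "You are a support assistant for $company.\n"
    "Summarize the ticket below in at most $max_sentences sentences.\n\n"
    "Ticket:\n$ticket_text"
)


def render_summary_prompt(company: str, ticket_text: str, max_sentences: int = 3) -> str:
    """Fill the shared template with values for one request."""
    # substitute() raises KeyError when a placeholder has no value,
    # so an incomplete template fails loudly instead of silently.
    return SUMMARY_PROMPT.substitute(
        company=company,
        ticket_text=ticket_text,
        max_sentences=max_sentences,
    )


print(render_summary_prompt("Acme", "Customer cannot log in after password reset."))
```

Keeping the template in one place means a wording change is made once and picked up by every team that uses it.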

2. Track Changes with Version Control

LLM systems involve many parts that change often. It’s important to track them properly.

- Save different versions of prompts, datasets, and retrieval rules.

- Keep a log of outputs used for testing or fine-tuning.

Tools like MLflow or Weights & Biases can help manage this. Tracking changes gives you the ability to trace issues, roll back mistakes, and maintain quality with LLMOps tools for enterprises.
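To make the idea concrete without tying it to a specific product, the sketch below shows a tiny in-memory prompt registry with versioning, rollback, and a content fingerprint for audit logs. It uses only the Python standard library and is not the API of MLflow or Weights & Biases; the `PromptRegistry` class and its method names are hypothetical.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class PromptRegistry:
    """In-memory store that keeps every version of each named prompt."""

    _versions: Dict[str, List[str]] = field(default_factory=dict)

    def save(self, name: str, text: str) -> int:
        """Store a new version and return its 1-based version number."""
        history = self._versions.setdefault(name, [])
        if history and history[-1] == text:
            return len(history)  # unchanged text does not create a new version
        history.append(text)
        return len(history)

    def get(self, name: str, version: Optional[int] = None) -> str:
        """Return a specific version, or the latest when version is None."""
        history = self._versions[name]
        return history[-1] if version is None else history[version - 1]

    def rollback(self, name: str) -> str:
        """Drop the latest version and return the one now in effect."""
        history = self._versions[name]
        if len(history) < 2:
            raise ValueError("no earlier version to roll back to")
        history.pop()
        return history[-1]

    def fingerprint(self, name: str, version: Optional[int] = None) -> str:
        """Short content hash, handy for referencing a prompt in audit logs."""
        text = self.get(name, version)
        return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


registry = PromptRegistry()
registry.save("ticket_summary", "Summarize this ticket.")
registry.save("ticket_summary", "Summarize this ticket in three sentences.")
print(registry.get("ticket_summary"), registry.fingerprint("ticket_summary"))
```

A production setup would persist this history and attach authors and timestamps, but the core operations (save, fetch by version, roll back) stay the same.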

3. Build CI/CD Pipelines for Safe Updates

When models or prompts are updated, errors can creep in. Automating your release process helps avoid that.

- Run tests on prompts before making changes live.

- Check for bias, unsafe responses, or poor results.

- Set rules to stop updates if tests fail.

This setup ensures your changes are reviewed and safe before reaching users, aligning with a structured LLMOps pipeline approach.
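The release rule described above can be sketched as a small gate that runs checks against test inputs and reports failures. Everything here is illustrative: the deny-list, the check names, and `fake_model` are hypothetical stand-ins, and a real pipeline would plug in proper bias and safety evaluators plus an actual model call.

```python
from typing import Callable, List, Tuple

# Hypothetical deny-list; real pipelines would use dedicated safety evaluators.
BLOCKED_TERMS = ("password", "ssn")


def check_not_empty(output: str) -> bool:
    """Fail when the model returns nothing useful."""
    return bool(output.strip())


def check_no_blocked_terms(output: str) -> bool:
    """Fail when the output contains a deny-listed term."""
    lowered = output.lower()
    return not any(term in lowered for term in BLOCKED_TERMS)


CHECKS: List[Tuple[str, Callable[[str], bool]]] = [
    ("not_empty", check_not_empty),
    ("no_blocked_terms", check_no_blocked_terms),
]


def release_gate(generate: Callable[[str], str], test_inputs: List[str]) -> List[str]:
    """Run every check on every test input and return the failure messages."""
    failures = []
    for text in test_inputs:
        output = generate(text)
        for name, check in CHECKS:
            if not check(output):
                failures.append(f"{name} failed for input {text!r}")
    return failures


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call so the sketch runs offline."""
    return f"Summary: {prompt[:40]}"


problems = release_gate(fake_model, ["Customer cannot log in", "Refund request"])
# In a CI job, a non-empty list would stop the update from going live.
print("BLOCK RELEASE" if problems else "OK TO RELEASE", problems)
```

The design choice worth noting is that the gate returns every failure rather than stopping at the first one, so reviewers see the full picture before deciding what to fix.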

4. Monitor Output and Performance

After deployment, you need to watch how the model behaves. It’s not just about speed, but also about response quality and usefulness.

- Measure latency and user satisfaction.

- Spot drift or unexpected answers early.

- Use alerts for low-confidence or strange results.

Ongoing LLMOps monitoring helps you keep quality high and fix problems before they affect users.
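Here is a minimal sketch of what such monitoring can look like: a rolling window over recent latency and user feedback, with simple threshold alerts. The `RollingMetric` class, the thresholds, and the sample values are hypothetical; dedicated observability tooling would replace this in practice.

```python
from collections import deque
from statistics import mean
from typing import List


class RollingMetric:
    """Keeps only the most recent `window` values of a metric."""

    def __init__(self, window: int = 100) -> None:
        self.samples = deque(maxlen=window)

    def record(self, value: float) -> None:
        self.samples.append(value)

    def average(self) -> float:
        return mean(self.samples) if self.samples else 0.0


def check_alerts(
    latency_ms: RollingMetric,
    satisfaction: RollingMetric,
    max_latency_ms: float = 2000.0,   # hypothetical threshold
    min_satisfaction: float = 0.7,    # hypothetical threshold
) -> List[str]:
    """Return alert messages for metrics that crossed their thresholds."""
    alerts = []
    # Metrics with no samples yet are skipped rather than treated as failing.
    if latency_ms.samples and latency_ms.average() > max_latency_ms:
        alerts.append("latency above threshold")
    if satisfaction.samples and satisfaction.average() < min_satisfaction:
        alerts.append("user satisfaction below threshold")
    return alerts


latency = RollingMetric(window=3)
feedback = RollingMetric(window=3)  # 1.0 = thumbs up, 0.0 = thumbs down
for ms, vote in [(800, 1.0), (2600, 0.0), (3100, 0.0), (2900, 1.0)]:
    latency.record(ms)
    feedback.record(vote)
print(check_alerts(latency, feedback))
```

Because the window holds only the latest three samples, the early fast response drops out and both alerts fire, which is the behavior you want when quality degrades gradually.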

5. Apply Security and Access Controls

When models deal with customer data or sensitive business content, access control is key.

- Limit who can change prompts or models.

- Keep detailed logs of actions taken.

- Protect data through encryption and restricted access.

These controls are standard LLMOps best practice, especially in regulated enterprise settings.
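The first two points can be sketched together: a role check that also writes every decision to an audit log. The role names, permissions, and `authorize` helper below are hypothetical and kept in memory for illustration; a real deployment would rely on your identity provider and a tamper-resistant log store.

```python
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "viewer": {"read_prompt"},
    "prompt_editor": {"read_prompt", "edit_prompt"},
    "admin": {"read_prompt", "edit_prompt", "deploy_model"},
}

# In-memory audit trail; every decision is recorded, allowed or not.
AUDIT_LOG: List[dict] = []


def authorize(user: str, role: str, action: str) -> bool:
    """Check whether the role permits the action and log the decision."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    AUDIT_LOG.append(
        {
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "allowed": allowed,
        }
    )
    return allowed


print(authorize("dana", "viewer", "edit_prompt"))
print(authorize("lee", "admin", "deploy_model"))
print(len(AUDIT_LOG), "audit entries recorded")
```

Logging denied attempts as well as approved ones is deliberate, since refused actions are often what an auditor wants to see.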

6. Connect LLMs with Business Tools

LLMs are more useful when they work with your other tools. These might include CRMs, helpdesks, or knowledge platforms.

- Use APIs to send and receive data from systems you already use.

- Keep input and output formats consistent.

- Allow different teams to tap into the same model logic.

This saves effort and supports faster adoption across departments. This enables seamless integration using enterprise-grade LLMOps platforms.
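One way to keep input and output formats consistent is to define a shared request and response shape that every integration uses. The sketch below assumes hypothetical `LLMRequest` and `LLMResponse` types and a placeholder `handle` function in place of a real model call.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class LLMRequest:
    source_system: str  # for example "crm" or "helpdesk"
    task: str
    text: str


@dataclass(frozen=True)
class LLMResponse:
    task: str
    output: str
    model_version: str


def handle(request: LLMRequest) -> LLMResponse:
    """Placeholder for the shared model logic that every team calls."""
    # A real implementation would call the model here.
    return LLMResponse(
        task=request.task,
        output=f"[{request.task}] {request.text.strip()}",
        model_version="demo-0",  # hypothetical version label
    )


def to_json(response: LLMResponse) -> str:
    """Serialize a response the same way for every downstream system."""
    return json.dumps(asdict(response), sort_keys=True)


reply = handle(LLMRequest(source_system="crm", task="summarize", text=" Renewal due in May. "))
print(to_json(reply))
```

With one schema, a CRM and a helpdesk can both call the same logic and parse the same response, so adding a new system does not mean inventing a new format.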

7. Start Small and Scale Gradually

Pick one use case that solves a real problem. This could be summarizing support tickets or answering internal questions.

- Test it with a small group first.

- Track results and gather feedback.

- Use the findings to guide your next rollout.

This method supports scalable adoption and reflects how enterprise AI teams roll out LLMOps in practice. Once your LLMOps setup is in place, the next step is to apply it across business functions.

Enterprises across industries are already using LLMs to support teams in customer service, HR, sales, legal, and more. But without LLMOps, these use cases can become hard to maintain or scale.

Also Read: What Are LLM Embeddings and How Do They Revolutionize AI?

Common Use Cases of LLMOps for Enterprise AI Agents and Automation

LLMOps supports a wide range of AI-powered tasks inside your organization. When models are properly managed, tested, and tracked, they can bring measurable results in many areas. Below are some of the most common use cases powered by Enterprise LLMOps and aligned with LLMOps fundamentals for enterprise adoption.

1. AI Agents for Customer Support

LLMs can power virtual agents that handle customer questions, pull history from tickets, and know when to escalate.

- Respond to common queries across chat and email.

- Personalize replies using CRM data.

- Escalate to a human when needed.

LLMOps helps keep these agents reliable by tracking performance, managing prompt updates, and maintaining consistency through LLMOps tools for enterprises.

2. Knowledge Assistants with RAG

Retrieval-Augmented Generation (RAG) lets LLMs give accurate answers based on your documents and data.

- Search across SOPs, manuals, and internal wikis.

- Answer employee or customer questions using verified content.

- Include source links with each answer.

LLMOps makes it easy to manage the retrieval setup, monitor performance, and ensure helpful, factual responses – critical elements of LLMOps architecture for large-scale deployments.
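The retrieve-then-answer flow can be sketched in a few lines. Note the substitution: production RAG systems typically use embeddings and a vector database, while this example uses plain word-count cosine similarity so it runs with no dependencies. The documents, source paths, and function names are all hypothetical.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple

# Hypothetical knowledge base keyed by source path.
DOCS: Dict[str, str] = {
    "wiki/password-reset": "To reset your password open the self service portal and choose reset password.",
    "sop/vpn-setup": "Install the VPN client and sign in with your employee account.",
    "manual/expenses": "Submit expense reports through the finance portal within 30 days.",
}


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, k: int = 1) -> List[Tuple[str, float]]:
    """Return the k best-matching sources with their scores."""
    q = tokenize(question)
    scored = [(source, cosine(q, tokenize(text))) for source, text in DOCS.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]


def build_prompt(question: str, k: int = 1) -> str:
    """Assemble a prompt that grounds the answer in retrieved content."""
    context = "\n".join(f"[{source}] {DOCS[source]}" for source, _ in retrieve(question, k))
    return (
        "Answer using only the context below and cite the source in brackets.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


print(build_prompt("How do I reset my password?"))
```

Passing the source path into the prompt is what lets the final answer include a source link, which also gives reviewers something concrete to verify.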

3. Helpdesk Automation for HR and IT

Internal teams often deal with repeated questions. LLMs can help by offering clear and consistent answers.

- Guide employees through password resets, device issues, or policy lookups.

- Share links to relevant documents or forms.

- Hand over complex queries to support teams when needed.

With LLMOps pipeline management and LLMOps monitoring, you can adapt answers over time and ensure the system stays up to date with policy changes.

4. Sales and Marketing Support

LLMs can help generate content, pull insights, or organize customer information.

- Create custom emails based on CRM data.

- Turn product features into customer-facing copy.

- Summarize user feedback for product teams.

LLMOps ensures outputs remain accurate and aligned with brand voice, while enabling regular prompt updates through the best LLMOps software for enterprise AI.

5. Legal and Compliance Document Review

Legal teams can use LLMs to review contracts, compare documents, or highlight key sections.

- Identify important clauses and risks.

- Check compliance with company policies.

- Produce short summaries for internal teams.

LLMOps adds control to this process by versioning prompts, logging outputs, and allowing human review, all of which are established LLMOps best practices for sensitive work.

How Wizr AI’s Platform Enables Secure, Scalable, & Governed LLMOps Deployment

Managing LLMs across your enterprise takes more than access to a model. You need the right systems to ensure security, visibility, control, and ease of use. Wizr enables Enterprise LLMOps success through our platform combined with platform-enabled AI services.

It provides a unified AI environment that helps organizations deploy, monitor, and scale LLM-powered applications securely across the enterprise. Instead of managing separate tools or building complex integrations, Wizr simplifies LLMOps adoption with a platform designed for enterprise workflows and governance.

Here’s how Wizr supports every layer of LLMOps:

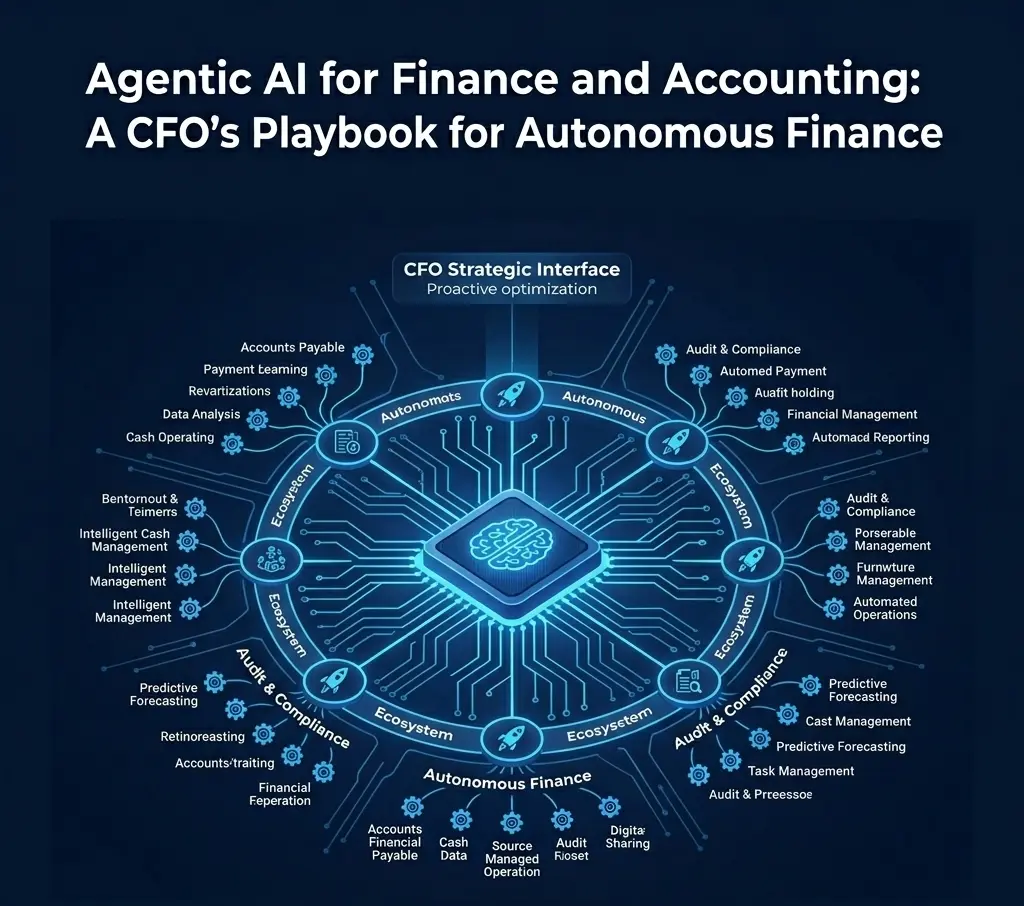

- Prebuilt and Configurable AI Agents: Wizr provides ready-to-deploy agents for Customer Support, IT Support Management, and Finance & Accounting. These agents are configurable to match enterprise workflows, helping teams launch automation quickly without starting from scratch.

- Secure RAG Integration with Your Data: Using Retrieval-Augmented Generation, Wizr allows LLMs to connect with enterprise systems such as CRMs, knowledge bases, and databases. Responses remain grounded in trusted internal data, improving accuracy and enabling secure enterprise AI deployment.

- Built-in Observability Tools: Our platform includes dashboards to monitor usage, output quality, and system performance. These insights help teams track model behavior in production and maintain reliable AI operations.

- Governance and Compliance Controls: Wizr supports enterprise-grade security practices, including role-based access controls, monitoring, and audit visibility. These capabilities help organizations maintain data governance and meet compliance requirements for enterprise AI.

- LLMOps Features Built In: Features such as prompt management, testing environments, and deployment controls help teams iterate safely and manage LLM-powered applications across development and production environments.

- Unified AI Environment for Enterprise Use: From AI agents and workflows to model-driven applications, our platform provides a centralized environment for building, managing, and scaling enterprise AI solutions.

Wizr AI helps organizations manage LLM-powered applications in a way that is secure, scalable, and operationally reliable. Whether teams are automating support processes, improving internal workflows, or deploying AI agents for complex tasks, Wizr combines our platform with enterprise AI services to support production-ready AI adoption and evolving enterprise LLMOps strategies.

Conclusion

As more enterprises adopt large language models, managing them effectively is becoming a serious challenge. You might be dealing with inconsistent outputs, prompt confusion, compliance risks, or a lack of visibility across teams. Without a proper LLMOps framework for enterprise applications, these issues can slow progress and increase costs. To scale LLMs with confidence, you need a reliable system that brings order, control, and accountability to every stage of your AI operations – highlighting the importance of LLMOps fundamentals for enterprise adoption.

Wizr helps you do exactly that. Our platform, combined with platform-enabled AI services, supports secure, scalable, and governed enterprise LLMOps deployments. With pre-built AI agents, secure data integration, real-time monitoring, and built-in governance, Wizr provides the tools enterprises need to deploy and manage LLM-powered applications across teams with speed and confidence.

With pre-built AI agents for Customer Support, IT Support Management, and Finance & Accounting, Wizr helps organizations move from experimental AI use to enterprise-wide impact while supporting broader MLOps and LLMOps strategies.

Build smarter AI workflows. Let Wizr help power your LLMOps pipeline and accelerate your journey toward production-ready enterprise AI.

FAQs

1. What is LLMOps, and why is it important for enterprises?

LLMOps (Large Language Model Operations) is the process of managing the full lifecycle of large language models, covering deployment, monitoring, tuning, and governance.

It’s important for enterprises because LLMs handle unstructured data, need more compute resources, and require strict oversight to prevent errors or risks. A structured enterprise-grade LLMOps platform ensures models remain reliable, scalable, and compliant.

With Wizr AI, enterprises gain built-in observability, secure deployments, and versioning through our platform and AI services, making LLMOps implementation simpler from day one.

2. How is LLMOps different from MLOps?

The key difference: MLOps manages traditional ML models, while LLMOps is built for generative AI models like GPT or LLaMA.

- MLOps → works best for structured datasets

- LLMOps → focuses on prompts, embeddings, hallucination control, and token limits

For enterprises, this means LLMOps addresses the unique operational and governance challenges of generative AI at scale. Wizr AI helps organizations implement these practices using our platform and AI-enabled workflows that support secure enterprise AI adoption.

3. What are some real-world use cases of LLMOps in enterprises?

LLMOps enables AI deployment across multiple business functions, such as:

- Customer service: instant support ticket resolution

- HR & IT: automated helpdesk operations

- Legal: faster contract analysis

- Sales: personalized email and proposal generation

These use cases require enterprise-level governance, monitoring, and version control. Wizr AI supports these capabilities through our platform and configurable AI agents, enabling enterprises to deploy and manage AI-driven workflows securely.

4. What are the key components of a scalable LLMOps architecture?

A robust LLMOps architecture should include:

- Prompt and version management

- Real-time performance monitoring & drift detection

- CI/CD pipelines for model updates

- Role-based access controls & audit logs

- Security and governance policies

These components help ensure LLM systems run securely and reliably in enterprise environments. Wizr AI supports these capabilities through our platform combined with AI services that help design, deploy, and manage AI workflows at scale.

5. How do I get started with LLMOps in my organization?

Begin small: start with one use case, like automating customer support tickets. Then expand gradually.

Steps to follow:

- Build clear workflows

- Apply version control on prompts and models

- Monitor performance continuously

- Ensure role-based security and governance

Enterprises can accelerate adoption with Wizr AI, leveraging our platform and enterprise AI services to implement and scale LLM-driven automation from pilot to production.

6. How does support for enterprise teams differ between AIOps and generic LLM APIs?

AIOps platforms provide monitoring and automation for IT operations, while generic LLM APIs only handle text generation. For enterprises, LLMOps adds another layer: managing prompts, ensuring compliance, monitoring for hallucinations, and enforcing governance.

With Wizr AI, teams benefit from enterprise-ready observability, integrations, and AI workflow orchestration through our platform and services, enabling safer and more scalable enterprise adoption than relying on standalone APIs.

7. What are the best practices for LLMOps in enterprises (2026)?

The best LLMOps practices for enterprises in 2026 include:

- Using prompt versioning and rollback for reliability

- Continuous monitoring for bias, drift, and hallucinations

- Secure governance with role-based access

- Optimizing cost with efficient model usage

- Building scalable CI/CD pipelines for model updates

Enterprises adopting these LLMOps best practices ensure generative AI applications are secure, compliant, and scalable. Wizr AI helps organizations implement these practices using our platform and AI services, enabling enterprises to operationalize AI without heavy infrastructure overhead.

About Wizr AI

Wizr AI helps enterprises build autonomous operations and accelerate software delivery with practical, production-ready AI. Our secure, modular platform enables teams to build, govern, and scale AI agents and intelligent workflows across Customer Support, IT Support Management, and Finance & Accounting. Through AI-powered engineering services, Wizr also helps organizations accelerate software development and modernization. With pre-built and configurable AI agents, along with enterprise-grade security and integrations, Wizr makes it easy to move from pilot to production with real business impact.

See how Wizr AI can help your teams move faster. 👉 Get in touch.
